It’s obviously an issue that needs a fix, as many people have started posting about it, but we’re a bit screwed if it’s hardware related. I’m going to see what Apple say next week; if nothing is fixed, I’m returning this handset and sticking with my S21 Ultra, which always gives excellent results in good light and in low light. I’m starting to think the auto-switching feature they have enabled is causing the issues, as it’s forced and there’s no way of turning it off. I’m even getting better results on my 4-year-old Samsung handset. This is just shocking from Apple. For the price I paid, I feel like I have been sold a half-baked product. Did they even test these cameras?

Even a normal landscape picture looks blurry. Up close, however, I’m getting good results, and portrait mode has much more detail than the standard setting on the camera. I sent them some sample pictures showing how terrible they come out: even in good lighting there’s always a blur when you zoom in a little after the picture has been taken, and they agreed that the pictures should not look like that. It’s the Pro Max, and I have booked to see an Apple specialist through their support.

I’ve tried everything to fix this issue, but these cameras are just rubbish; my S21 Ultra pics are way ahead of what these new cameras on the 13 produce.

Snapchat is today releasing Lens Studio 3.2, an update to its tool that allows designers to create new Snapchat Lenses compatible with the LiDAR scanner built into the iPhone 12 Pro and 2020 iPad Pro.

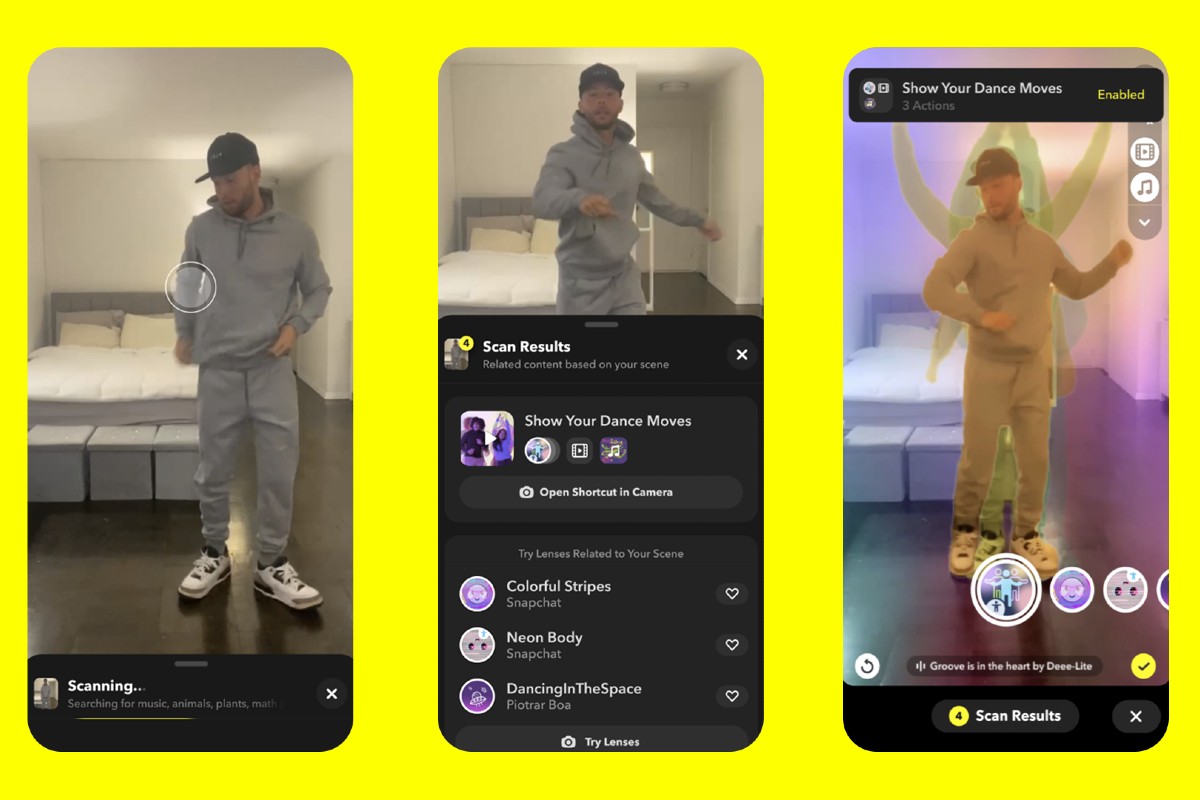

As Apple introduced the iPhone 12 Pro and the iPhone 12 Pro Max with a LiDAR scanner, some developers are now taking advantage of the new hardware to enhance their apps. Snapchat today announced a new version of Lens Studio that lets designers create special lenses to work with the LiDAR scanner on the iPhone 12 Pro.

A new version of the Snapchat app was quickly shown during the October Apple keynote to demonstrate the LiDAR scanner in action, and now the company has detailed how the feature will work for its users who own an iPhone 12 Pro.

The LiDAR scanner measures the depth of a scene by emitting light and calculating the time it takes to hit an object and return to the sensor. Here’s how Apple describes the technology:

“The LiDAR Scanner measures absolute depth by timing how long it takes invisible light beams to travel from the transmitter to objects, then back to the receiver. LiDAR works with the depth frameworks of iOS 14 to create a tremendous amount of high-resolution data spanning the camera’s entire field of view. And the beams pulse in nanoseconds, constantly measuring the scene and refining the depth map.”

This enables AR apps to accurately display elements in a scene, as the iPhone knows exactly where each object is located and what its real size is. Snapchat will use this technology to offer new unique Lenses that can render thousands of AR objects in real time using iPhone 12 Pro cameras.

“The addition of the LiDAR Scanner to iPhone 12 Pro models enables a new level of creativity for augmented reality,” said Eitan Pilipski, Snap’s SVP of Camera Platform. “We’re excited to collaborate with Apple to bring this sophisticated technology to our Lens Creator community.”
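To make the time-of-flight idea in Apple's description concrete, here is a minimal sketch of the underlying arithmetic: a light pulse travels to the object and back, so the distance is half the round-trip time multiplied by the speed of light. The function names are hypothetical and chosen only for illustration; this is not Apple's or Snap's implementation, and a real sensor does far more (many beams, noise filtering, fusion with camera data).

```python
# Speed of light in vacuum, in metres per second (exact by SI definition).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def depth_from_round_trip(round_trip_seconds: float) -> float:
    """Return the one-way distance in metres for a measured round-trip time.

    Hypothetical helper for illustration only.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels out to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


def round_trip_for_depth(depth_metres: float) -> float:
    """Return the round-trip time in seconds for an object at the given depth."""
    if depth_metres < 0:
        raise ValueError("depth cannot be negative")
    return 2.0 * depth_metres / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # A 20-nanosecond round trip corresponds to an object roughly 3 m away.
    print(f"{depth_from_round_trip(20e-9):.3f} m")
```

The scale here is why the beams have to pulse in nanoseconds: an object about 3 m away returns the light in roughly 20 ns, so room-scale depth differences show up as nanosecond-level timing differences.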
